The Problem With Most Engineering Metrics

If I ask you what your team's velocity is, you can probably tell me. If I ask whether that velocity is high or low relative to what's possible, you'll likely shrug.

Velocity points are a classic example of a metric that's easy to measure and almost impossible to act on. They tell you *that* work is moving, not *where* it's getting stuck or why.

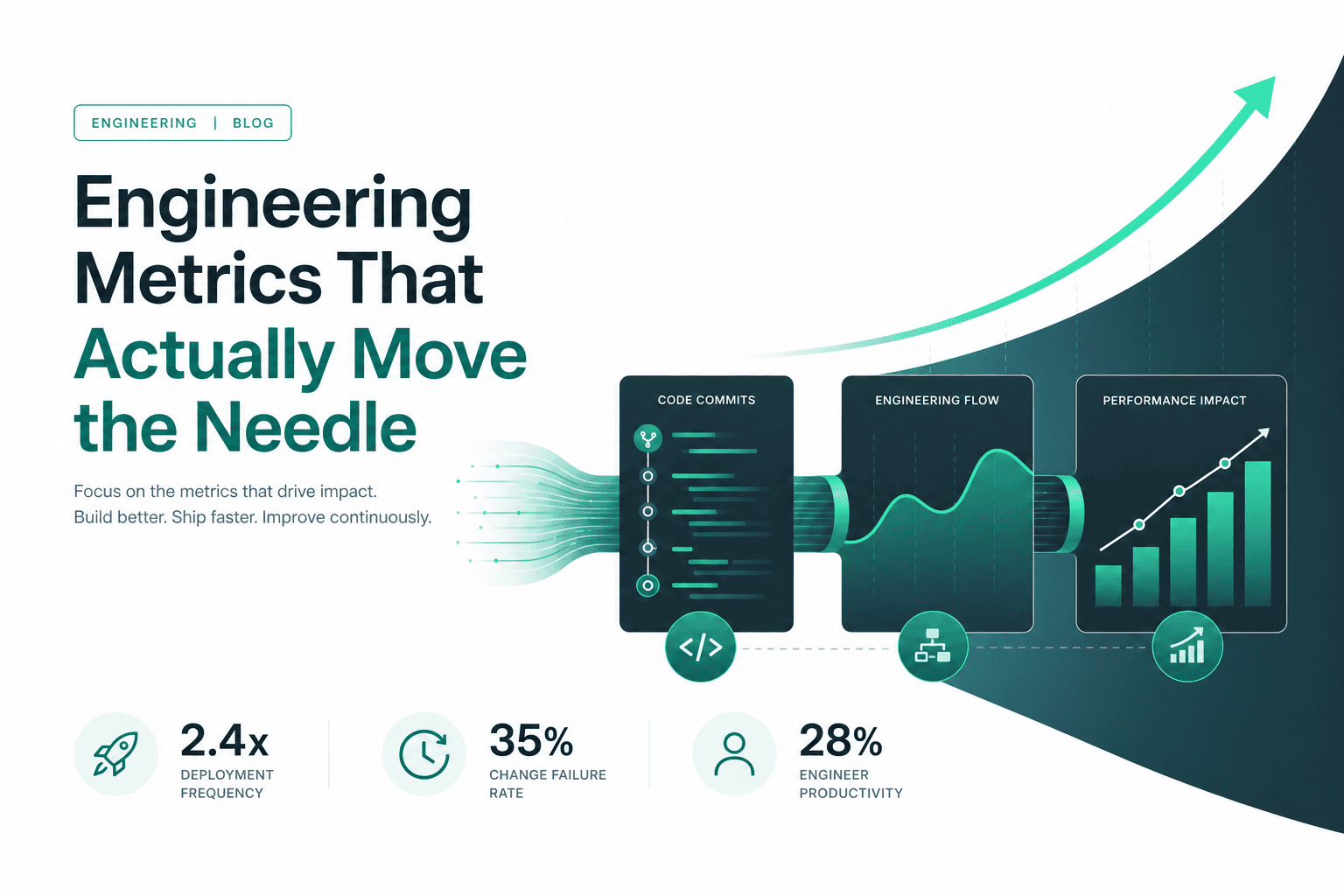

The Four Metrics That Matter

The DORA (DevOps Research and Assessment) research team spent years studying what separates high-performing engineering organizations from the rest. Their findings converged on four metrics:

1. Deployment Frequency

How often does your team deploy to production? High performers deploy multiple times per day. Low performers deploy once a month or less.

This is a proxy for batch size, feedback loop length, and organizational trust in the engineering process.
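As a rough sketch of how this could be measured, the snippet below counts deployments per ISO week from a list of timestamps. The `deployments_per_week` helper and the sample data are hypothetical; in practice the timestamps would come from whatever your CI/CD system records.

```python
from collections import Counter
from datetime import datetime


def deployments_per_week(deploy_times):
    """Count deployments per ISO (year, week) bucket."""
    counts = Counter()
    for ts in deploy_times:
        iso = ts.isocalendar()  # (ISO year, ISO week, ISO weekday)
        counts[(iso[0], iso[1])] += 1
    return dict(counts)


# Hypothetical sample data: production deployment timestamps.
deploys = [
    datetime(2024, 3, 4, 10, 0),
    datetime(2024, 3, 4, 15, 30),
    datetime(2024, 3, 6, 9, 15),
    datetime(2024, 3, 12, 11, 0),
]

weekly = deployments_per_week(deploys)
print(weekly)  # {(2024, 10): 3, (2024, 11): 1}
```

Weekly buckets are only one choice; a team that deploys many times a day would probably bucket by day instead.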

2. Lead Time for Changes

From the moment a developer commits code to when it's in production, how long does that take? Elite organizations measure this in hours. Most teams measure it in days or weeks.

Long lead times usually point to large PRs, a slow review culture, or a complex deployment process.
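A minimal sketch of the calculation, assuming you can pair each change's commit time with the time it reached production. The `lead_times_hours` helper and the sample pairs are illustrative, not part of any particular tool.

```python
from datetime import datetime
from statistics import median


def lead_times_hours(changes):
    """Return commit-to-production lead time in hours for each change.

    `changes` is a list of (committed_at, deployed_at) datetime pairs.
    """
    return [
        (deployed - committed).total_seconds() / 3600
        for committed, deployed in changes
    ]


# Hypothetical sample data: (commit time, production deploy time).
changes = [
    (datetime(2024, 3, 4, 9, 0), datetime(2024, 3, 4, 13, 0)),   # 4 hours
    (datetime(2024, 3, 4, 10, 0), datetime(2024, 3, 5, 10, 0)),  # 24 hours
    (datetime(2024, 3, 5, 8, 0), datetime(2024, 3, 8, 8, 0)),    # 72 hours
]

median_lead = median(lead_times_hours(changes))
print(median_lead)  # 24.0
```

The median is used here rather than the mean because a handful of long-running changes can otherwise dominate the number.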

3. Change Failure Rate

What percentage of deployments cause a production incident or require a hotfix? High performers keep this below 15%.

Chasing speed without quality shows up here immediately.
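The arithmetic is simple enough to sketch. The example below assumes each deployment has already been labeled as having caused an incident or hotfix; how that labeling happens is up to your team, and the `change_failure_rate` name is hypothetical.

```python
def change_failure_rate(deployment_failed):
    """Return the percentage of deployments flagged as failures.

    `deployment_failed` is a list of booleans, one per deployment,
    True when the deployment caused an incident or needed a hotfix.
    """
    if not deployment_failed:
        return 0.0
    return 100.0 * sum(deployment_failed) / len(deployment_failed)


# Hypothetical sample: 20 deployments, 2 of which caused incidents.
outcomes = [False] * 18 + [True] * 2
rate = change_failure_rate(outcomes)
print(rate)  # 10.0
```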

4. Mean Time to Restore

When something goes wrong (and it will), how quickly can you recover? This measures how resilient your team and systems are, not just the quality of what you ship.
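One way this could be computed, assuming each incident record carries a start time and a restore time. The helper name and the sample incidents are made up for illustration.

```python
from datetime import datetime


def mean_time_to_restore_minutes(incidents):
    """Average minutes from incident start to service restoration.

    `incidents` is a list of (started_at, restored_at) datetime pairs.
    Returns None when there are no incidents to average.
    """
    durations = [
        (restored - started).total_seconds() / 60
        for started, restored in incidents
    ]
    if not durations:
        return None
    return sum(durations) / len(durations)


# Hypothetical sample data: two incidents lasting 30 and 90 minutes.
incidents = [
    (datetime(2024, 3, 4, 14, 0), datetime(2024, 3, 4, 14, 30)),
    (datetime(2024, 3, 9, 2, 0), datetime(2024, 3, 9, 3, 30)),
]

mttr = mean_time_to_restore_minutes(incidents)
print(mttr)  # 60.0
```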

How to Actually Use These

The mistake most teams make is measuring these and then... discussing them in a quarterly review. That's too slow.

The goal is to make these metrics ambient — visible, real-time, and connected to daily work.

When a PR has been open for 5 days, that's a lead time signal. When deployment frequency drops below your weekly baseline, something changed in your process. When change failure rate spikes, your test coverage story is probably broken.
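The first two signals above can be sketched as simple checks that run on a schedule. The 5-day threshold comes from the example in the text; the PR identifiers, the `stale_prs` and `below_baseline` helpers, and the baseline value are all hypothetical.

```python
from datetime import datetime, timedelta

# Threshold taken from the "open for 5 days" example; tune to your team.
STALE_PR_AGE = timedelta(days=5)


def stale_prs(open_prs, now, max_age=STALE_PR_AGE):
    """Return the IDs of PRs that have been open for at least `max_age`.

    `open_prs` maps a PR identifier to the datetime it was opened.
    """
    return sorted(
        pr_id for pr_id, opened in open_prs.items() if now - opened >= max_age
    )


def below_baseline(deploys_this_week, weekly_baseline):
    """True when this week's deployment count is under the baseline."""
    return deploys_this_week < weekly_baseline


# Hypothetical sample data.
now = datetime(2024, 3, 11, 9, 0)
open_prs = {
    "PR-101": datetime(2024, 3, 4, 9, 0),  # open 7 days
    "PR-102": datetime(2024, 3, 9, 9, 0),  # open 2 days
}

print(stale_prs(open_prs, now))  # ['PR-101']
print(below_baseline(3, 5))      # True
```

Checks like these are only useful if the result lands somewhere the team already looks, such as a chat channel or the board itself.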

What These Metrics Don't Tell You

DORA metrics are outcome metrics. They tell you the health of your delivery pipeline, but not why a specific feature is delayed or which developer is overloaded.

For that, you need process metrics: task age by stage, reviewer distribution, focus time ratios, meeting-to-coding time ratios.
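To make one of these concrete, here is a small sketch of reviewer distribution: the share of completed reviews handled by each person. The reviewer names and the `reviewer_share` helper are invented for the example.

```python
from collections import Counter


def reviewer_share(reviews):
    """Return each reviewer's fraction of all completed reviews.

    `reviews` is a list with one reviewer name per completed review.
    """
    counts = Counter(reviews)
    total = sum(counts.values())
    return {name: count / total for name, count in counts.items()}


# Hypothetical sample: one reviewer is handling most of the load.
reviews = ["ana", "ana", "ana", "ben"]
shares = reviewer_share(reviews)
print(shares)  # {'ana': 0.75, 'ben': 0.25}
```

A heavily skewed distribution is a hint that one person may be a review bottleneck, which would also show up as longer lead times.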

The best teams use both layers: DORA for the "is the system healthy?" question, and process metrics for the "what specifically should we improve this week?" question.

Starting Point

Pick one DORA metric. Measure it for 30 days without changing anything. Let the baseline tell you where you are. Then make one change — reduce PR size, add deployment automation, clarify incident runbooks — and watch the metric respond.
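A sketch of the before-and-after comparison, assuming you have collected the same metric over a baseline period and again after the change. The `percent_change` helper and the sample lead times are hypothetical.

```python
from statistics import median


def percent_change(baseline_values, after_values):
    """Percentage change in the median between two measurement periods.

    A negative result means the metric went down after the change.
    """
    base = median(baseline_values)
    if base == 0:
        raise ValueError("baseline median is zero; percent change is undefined")
    return 100.0 * (median(after_values) - base) / base


# Hypothetical sample: lead time in hours before and after reducing PR size.
baseline_lead_hours = [40, 48, 56]
after_lead_hours = [30, 36, 42]

change = percent_change(baseline_lead_hours, after_lead_hours)
print(change)  # -25.0
```

With only a few weeks of data a shift like this could be noise, so it is worth watching the metric for another period before declaring the change a success.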

The goal isn't a perfect dashboard. It's a team that knows what to improve next.